Quick Facts

- Category: Robotics & IoT

- Published: 2026-05-07 15:06:12


Breaking: Data Quality Failures Now Driving Costly AI Blunders in Autonomous Systems

Poor data quality is frequently cited as a leading cause of AI project failures, but the nature of those failures is shifting dramatically. In traditional machine learning, errors were usually visible: a wrong dashboard number could be caught and the model retrained. With generative and agentic AI, those safety nets vanish.

A pricing model that shipped on flawed data once caused a $2.3 million margin shortfall. A chatbot fed from a stale knowledge base confidently gave wrong answers to thousands of customers. An autonomous procurement agent committed budget on incomplete supplier data before any human could review it. These are not one-off bugs — they are systemic failures of data fitness.

“The AI operated exactly as designed, but on data that was never fit for purpose,” said Dr. Elena Marlow, chief data officer at the Center for Reliable AI. “The further AI moves from prediction to action, the less tolerance we have for data quality failures — and the harder they are to catch before damage is done.”

Background: The Invisible Poison in AI Pipelines

Nobody builds bad models on purpose. Engineers train on datasets that appear clean until hidden defects surface in production. In machine learning, those defects often show up in dashboards: a retail revenue forecast is off, or a fraud detection model lets a fraudulent transaction through (a false negative). Analysts catch the signal, and someone retrains the model. The damage is contained.

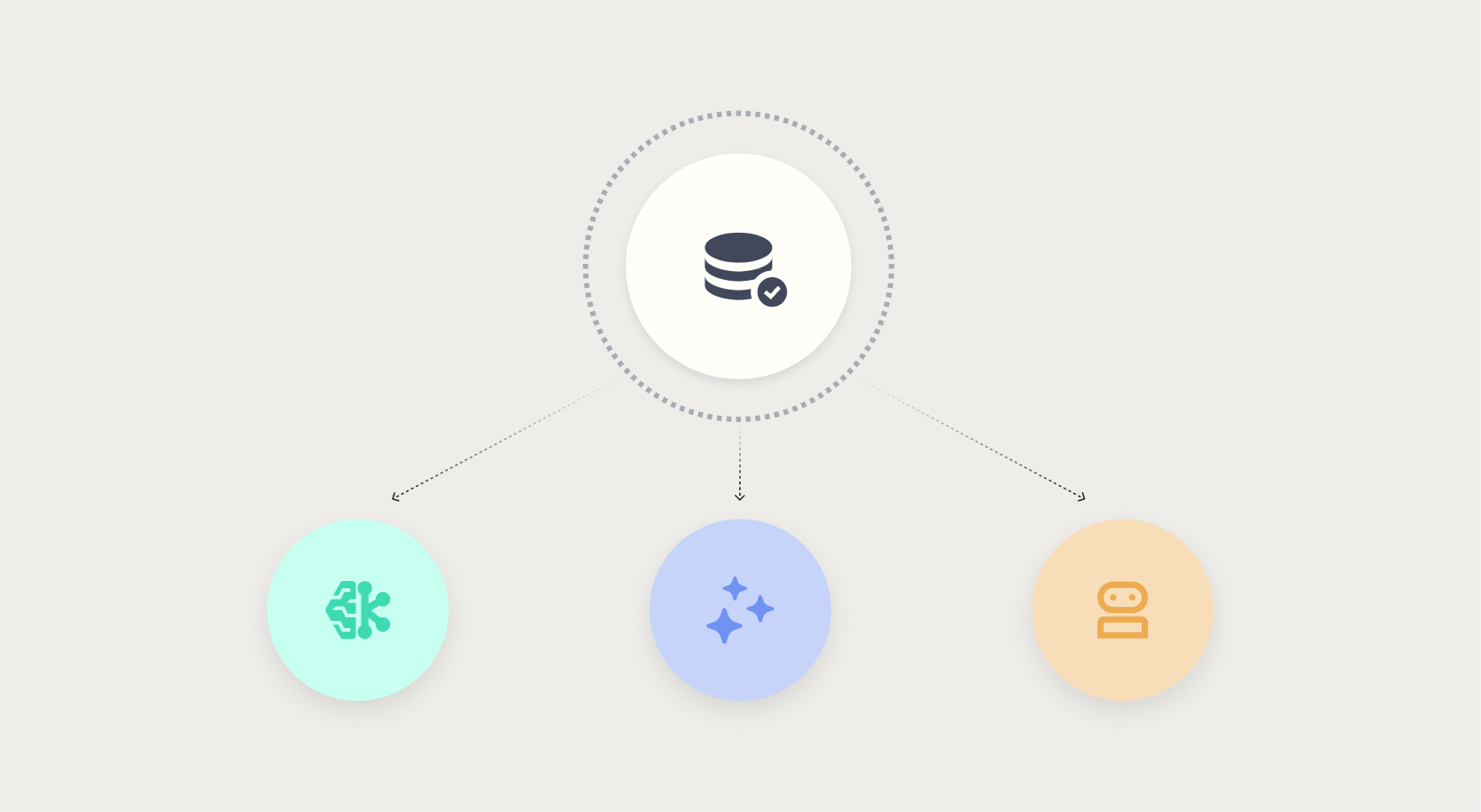

Generative AI and agentic AI break that containment. A large language model retrieving from a stale knowledge base produces a confident wrong answer with no error signal. An autonomous agent executing a multi-step workflow may commit funds, schedule shipments, or update records based on incomplete or outdated data. There is no dashboard screaming “this is wrong.” The model is doing exactly what it was asked to do — on garbage input.

“In traditional ML, data quality issues are like a leaky pipe — you see the puddle,” said Marlow. “In generative and agentic systems, the pipe runs behind the walls of automation. The puddle forms in a place nobody looks until the floor collapses.”

What This Means for Businesses Deploying Advanced AI

The implications are urgent. Any organization rolling out chatbots, autonomous agents, or AI-driven decision systems must treat data quality as a continuous operational discipline — not a one-time pre-training task. Data must be fit for the specific action the AI will take, not just for prediction accuracy.

- Generative AI requires real-time freshness checks. A knowledge base that is even 24 hours stale can cause reputation-damaging errors in customer-facing chatbots.

- Agentic AI needs guardrails on data sources. An autonomous procurement agent must validate supplier data before acting — or the company risks binding commitments on false premises.

- Monitoring must shift from model metrics (accuracy, drift) to outcome metrics: Did the agent’s action produce the intended result? Was the data it used correct at the moment of decision?
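The three checks above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the 24-hour threshold is borrowed from the example in the first bullet, and the field names, `DecisionRecord`, and `check_before_acting` are hypothetical names invented for this sketch.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Assumption: 24 hours, taken from the staleness example in the text.
MAX_AGE = timedelta(hours=24)

# Assumption: hypothetical fields a supplier record must carry before an agent acts.
REQUIRED_SUPPLIER_FIELDS = ("supplier_id", "unit_price", "currency", "contract_end")


def is_fresh(last_updated: datetime, now: datetime, max_age: timedelta = MAX_AGE) -> bool:
    """Return True if the data was updated within max_age of now."""
    return now - last_updated <= max_age


def missing_fields(record: dict, required=REQUIRED_SUPPLIER_FIELDS) -> list:
    """Return the required fields that are absent or empty in the record."""
    return [name for name in required if record.get(name) in (None, "")]


@dataclass
class DecisionRecord:
    """Snapshot of data fitness at the moment of decision, kept for outcome review."""
    action: str
    data_was_fresh: bool
    missing: list

    @property
    def safe_to_act(self) -> bool:
        # The agent may proceed only if the data is fresh and complete.
        return self.data_was_fresh and not self.missing


def check_before_acting(action: str, record: dict,
                        last_updated: datetime, now: datetime) -> DecisionRecord:
    """Run the freshness and completeness checks and record the result."""
    return DecisionRecord(action, is_fresh(last_updated, now), missing_fields(record))


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    supplier = {"supplier_id": "S-1", "unit_price": 9.5, "currency": ""}
    decision = check_before_acting("place_order", supplier, now - timedelta(hours=2), now)
    print(decision, decision.safe_to_act)
```

Storing the `DecisionRecord` alongside the action is what makes the third bullet answerable later: a reviewer can see whether the data was fresh and complete at the moment the agent acted, rather than only whether the model's accuracy metrics looked healthy.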

“We’re seeing organizations spend millions on model development and then skip the one thing that determines whether it works in the wild: data quality operations,” Marlow warned. “It’s like building a Formula 1 car and putting cheap tires on it. You’ll crash at the first turn.”

From Dashboard Alerts to Hidden Liability

The old relationship between machine learning and data quality was manageable: bad data caused visible errors, and someone hit the pause button. The new relationship — with generative and agentic systems — is one of silent escalation. The AI acts, the damage accrues, and no alarm sounds until the budget is overdrawn, a contract is breached, or a regulatory fine arrives.

Experts call for a shift in how enterprises think about data. Instead of treating data as a one-time asset that is cleaned up during model training, they argue it must be treated as a live liability that can poison any system using it at any time. Real-time data observability, automated freshness checks, and human-in-the-loop approval for high-stakes agent actions are no longer optional; they are table stakes.
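One way to picture human-in-the-loop approval for high-stakes agent actions is a simple routing rule. The sketch below is an assumption-laden illustration: the dollar threshold, the `ProposedAction` fields, and the `route_action` function are hypothetical and do not describe any particular vendor's product or the experts' own method.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_EXECUTE = "auto_execute"
    HUMAN_REVIEW = "human_review"
    BLOCK = "block"


@dataclass(frozen=True)
class ProposedAction:
    description: str
    amount_usd: float
    data_checks_passed: bool  # result of upstream freshness/completeness checks


# Assumption: a hypothetical spend level above which a person must approve.
REVIEW_THRESHOLD_USD = 10_000.0


def route_action(action: ProposedAction,
                 threshold: float = REVIEW_THRESHOLD_USD) -> Route:
    """Decide whether an agent action runs, waits for a human, or is blocked."""
    if not action.data_checks_passed:
        # Never act on data that failed its checks, regardless of amount.
        return Route.BLOCK
    if action.amount_usd >= threshold:
        # High-stakes actions wait for explicit human approval.
        return Route.HUMAN_REVIEW
    return Route.AUTO_EXECUTE


if __name__ == "__main__":
    order = ProposedAction("Reorder packaging", 25_000.0, data_checks_passed=True)
    print(route_action(order))
```

The ordering of the two checks is the design point: failed data checks block the action outright, so a human reviewer is only asked to approve actions whose inputs have already passed validation.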

“Every company deploying agentic AI right now is running a silent experiment on their own data hygiene,” said Marlow. “Some will discover they passed. Others will discover they failed when the legal team calls.”

— Breaking news report based on urgent industry findings.